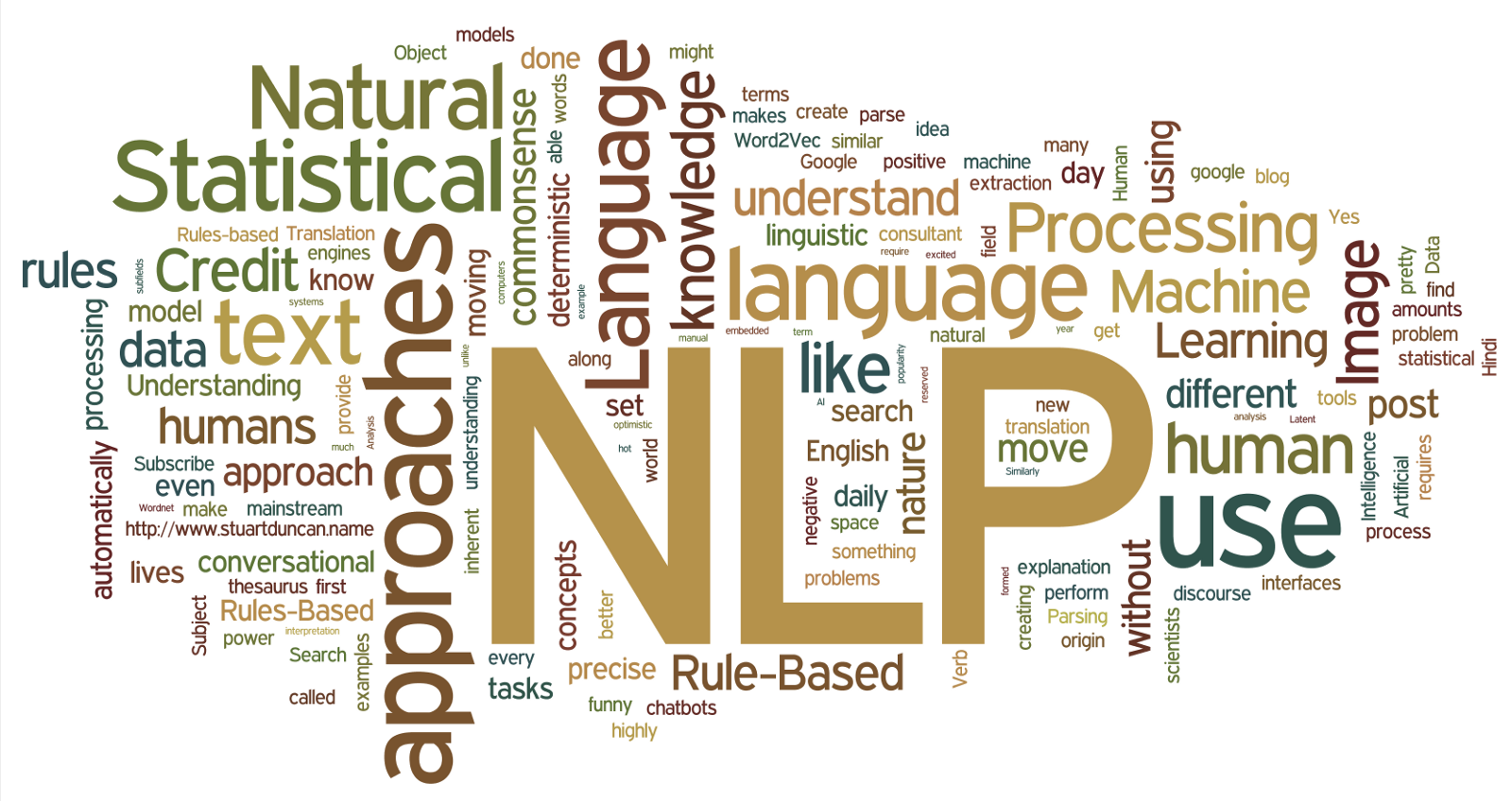

What is Natural Language Processing (NLP)?

Natural Language Processing (NLP) is a field of artificial intelligence (AI) and computational linguistics that focuses on enabling computers to understand, interpret, and generate human language in a way that is meaningful and contextually relevant. It involves the development of algorithms and models that can process large volumes of natural language data, such as text and speech, with the aim of extracting useful information, detecting patterns, and making intelligent decisions.

At its core, NLP encompasses a wide range of tasks and techniques, including:

Tokenization: Breaking down text into smaller units, such as words or phrases (tokens), to facilitate further analysis. This step is essential for tasks like parsing, part-of-speech tagging, and named entity recognition.
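The sketch below is a minimal tokenizer built on a single regular expression. Runs of word characters become tokens, and each punctuation mark becomes a token of its own. The pattern and the `tokenize` function are illustrative choices, not a standard. Production tokenizers also handle contractions, abbreviations such as "Dr.", and subword units.

```python
import re

# Illustrative pattern: a run of word characters, or any single non-space symbol.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")

def tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)

print(tokenize("Tokenizers split text into units, like words."))
# ['Tokenizers', 'split', 'text', 'into', 'units', ',', 'like', 'words', '.']
```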

Part-of-Speech (POS) Tagging: Assigning grammatical categories (e.g., noun, verb, adjective) to each word in a sentence. POS tagging is crucial for understanding the syntactic structure of a sentence and for disambiguating words that can play more than one grammatical role (e.g., "book" as a noun or a verb).
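The simplest trainable tagger is the "most frequent tag" baseline, sketched below. It assigns each word the tag it carried most often in a tagged corpus. The tiny corpus and the fallback tag `NOUN` for unknown words are illustrative assumptions. Because this baseline ignores context, it cannot resolve ambiguous words. That limitation is why practical taggers use context-aware models such as HMMs or neural networks.

```python
from collections import Counter, defaultdict

def train_most_frequent_tag(tagged_sentences):
    """Map each (lowercased) word to the tag it received most often."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word.lower()][tag] += 1
    return {word: tags.most_common(1)[0][0] for word, tags in counts.items()}

def tag_tokens(tokens, model, default="NOUN"):
    """Tag each token; words unseen in training get the default tag."""
    return [(tok, model.get(tok.lower(), default)) for tok in tokens]

# Tiny hand-made training corpus (illustrative only).
corpus = [
    [("the", "DET"), ("dog", "NOUN"), ("runs", "VERB")],
    [("the", "DET"), ("cat", "NOUN"), ("sleeps", "VERB")],
    [("a", "DET"), ("quick", "ADJ"), ("dog", "NOUN"), ("barks", "VERB")],
]
model = train_most_frequent_tag(corpus)
print(tag_tokens(["The", "quick", "cat", "barks", "loudly"], model))
# [('The', 'DET'), ('quick', 'ADJ'), ('cat', 'NOUN'), ('barks', 'VERB'), ('loudly', 'NOUN')]
```

In the example, "loudly" is tagged `NOUN` only because it never appeared in training. This shows how heavily the baseline depends on its fallback rule.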

Parsing: Analyzing the grammatical structure of sentences to determine their syntactic relationships. This involves identifying phrases, clauses, and dependencies between words, which is essential for tasks like sentence understanding and semantic analysis.
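Constituency parsing with a context-free grammar can be illustrated with the classic CKY algorithm, sketched below. The algorithm fills a chart of which phrase types can span each substring of the sentence. The toy grammar and lexicon here are hypothetical and written in Chomsky normal form, which CKY requires. This version only recognizes whether a parse exists. Storing back-pointers in the chart would let it recover the actual tree.

```python
from itertools import product

# Hypothetical toy grammar in Chomsky normal form: (left, right) -> parents.
BINARY_RULES = {
    ("NP", "VP"): {"S"},
    ("Det", "N"): {"NP"},
    ("V", "NP"): {"VP"},
}
# Hypothetical lexical rules: word -> preterminal categories.
LEXICAL_RULES = {
    "the": {"Det"}, "a": {"Det"},
    "dog": {"N"}, "cat": {"N"},
    "chased": {"V"}, "saw": {"V"},
}

def cky_recognize(words, start="S"):
    """Return True if the grammar can derive the whole word sequence."""
    n = len(words)
    # chart[i][j] holds the nonterminals that can span words[i:j].
    chart = [[set() for _ in range(n + 1)] for _ in range(n + 1)]
    for i, word in enumerate(words):
        chart[i][i + 1] = set(LEXICAL_RULES.get(word, set()))
    for length in range(2, n + 1):          # span length
        for i in range(n - length + 1):     # span start
            j = i + length
            for k in range(i + 1, j):       # split point
                for left, right in product(chart[i][k], chart[k][j]):
                    chart[i][j] |= BINARY_RULES.get((left, right), set())
    return start in chart[0][n]

print(cky_recognize("the dog chased a cat".split()))  # True
print(cky_recognize("dog the chased".split()))        # False
```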

Named Entity Recognition (NER): Identifying and classifying named entities (e.g., persons, organizations, locations) mentioned in text. NER is crucial for tasks like information extraction, entity linking, and knowledge graph construction.
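Modern NER systems are usually statistical or neural. The idea of finding and typing entity spans can still be shown with a simpler gazetteer (dictionary) lookup, sketched below. The gazetteer entries are hypothetical. The greedy longest-match rule lets "University of London" win over the shorter "London" when both could match at the same position.

```python
# Hypothetical gazetteer: name (as a tuple of tokens) -> entity type.
GAZETTEER = {
    ("Ada", "Lovelace"): "PERSON",
    ("London",): "LOCATION",
    ("University", "of", "London"): "ORGANIZATION",
}
MAX_LEN = max(len(name) for name in GAZETTEER)

def find_entities(tokens):
    """Greedy longest-match lookup of gazetteer names in a token list."""
    entities, i = [], 0
    while i < len(tokens):
        # Try the longest candidate span first so longer names win.
        for length in range(min(MAX_LEN, len(tokens) - i), 0, -1):
            span = tuple(tokens[i:i + length])
            if span in GAZETTEER:
                entities.append((" ".join(span), GAZETTEER[span]))
                i += length
                break
        else:
            i += 1  # no entity starts here; move to the next token
    return entities

print(find_entities("The University of London is located in London".split()))
# [('University of London', 'ORGANIZATION'), ('London', 'LOCATION')]
```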

Semantic Analysis: Extracting the meaning and intent from text by analyzing its semantics and context. This includes tasks like sentiment analysis, topic modeling, and semantic role labeling, which help uncover underlying patterns and insights in textual data.
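As one concrete case of semantic analysis, the sketch below shows a lexicon-based sentiment scorer with a crude negation rule. The word scores, the negator list, and the one-word negation scope are all illustrative assumptions. Real sentiment lexicons are much larger, and learned models capture context far better. The function expects tokens without attached punctuation.

```python
# Hypothetical word-level sentiment scores (real lexicons are far larger).
SENTIMENT_LEXICON = {"good": 1, "great": 2, "love": 2, "bad": -1, "terrible": -2, "boring": -1}
NEGATORS = {"not", "never", "no"}

def sentiment_score(tokens):
    """Sum lexicon scores, flipping the sign of a word directly after a negator."""
    score = 0
    for i, tok in enumerate(tokens):
        value = SENTIMENT_LEXICON.get(tok.lower(), 0)
        if i > 0 and tokens[i - 1].lower() in NEGATORS:
            value = -value
        score += value
    return score

def sentiment_label(tokens):
    s = sentiment_score(tokens)
    return "positive" if s > 0 else "negative" if s < 0 else "neutral"

print(sentiment_label("The plot was not good and the ending was terrible".split()))  # negative
```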

Machine Translation: Translating text from one language to another while preserving its meaning and context. Earlier machine translation systems relied on hand-written rules with syntactic analysis, or on statistical models learned from parallel text. Most current systems use neural networks. All of these approaches aim for translations that are both accurate and fluent.
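The statistical approach mentioned above can be illustrated with IBM Model 1, a classic word-alignment model. It learns word translation probabilities t(e | f) from sentence pairs using expectation-maximization (EM). The sketch below omits the NULL word and all later model refinements. The three-sentence German-English corpus is a standard toy example and far too small for real use.

```python
from collections import defaultdict

def train_ibm_model1(pairs, iterations=10):
    """Estimate t(e | f) with EM (IBM Model 1, simplified: no NULL word)."""
    english_vocab = {e for _, english in pairs for e in english}
    # Start from a uniform distribution over English words.
    t = defaultdict(lambda: 1.0 / len(english_vocab))
    for _ in range(iterations):
        count = defaultdict(float)  # expected counts c(e, f)
        total = defaultdict(float)  # expected counts c(f)
        # E-step: spread each English word's count over the foreign words it may align to.
        for foreign, english in pairs:
            for e in english:
                z = sum(t[(e, f)] for f in foreign)
                for f in foreign:
                    share = t[(e, f)] / z
                    count[(e, f)] += share
                    total[f] += share
        # M-step: renormalize expected counts into probabilities.
        t = defaultdict(float, {(e, f): n / total[f] for (e, f), n in count.items()})
    return t

def best_translation(t, f):
    """Most probable English word for foreign word f under t."""
    candidates = {e: p for (e, ff), p in t.items() if ff == f}
    return max(candidates, key=candidates.get)

# Toy parallel corpus: (German tokens, English tokens).
pairs = [
    ("das haus".split(), "the house".split()),
    ("das buch".split(), "the book".split()),
    ("ein buch".split(), "a book".split()),
]
t = train_ibm_model1(pairs)
print({f: best_translation(t, f) for f in ["das", "haus", "buch", "ein"]})
```

No alignments are given. The model still learns that "haus" maps to "house", because "das" is explained better by "the" across the other sentence pairs.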

Question Answering (QA): Generating accurate responses to user queries by understanding the meaning and intent behind the questions. QA systems use NLP techniques, such as information retrieval and text summarization, to extract relevant information from large corpora of text and provide concise answers.
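A very small retrieval-style QA baseline is sketched below. It splits a passage into sentences and returns the sentence that shares the most content words with the question. The stopword list and the function names are hypothetical. The sketch returns a whole sentence rather than an exact answer span, which modern reading-comprehension models extract.

```python
import re

# Hypothetical minimal stopword list; real systems use larger ones.
STOPWORDS = {"the", "a", "an", "is", "of", "what", "which", "who", "in", "to", "does"}

def content_words(text):
    """Lowercased set of words, minus stopwords."""
    return {w for w in re.findall(r"\w+", text.lower()) if w not in STOPWORDS}

def best_sentence(question, passage):
    """Return the passage sentence with the largest word overlap with the question."""
    sentences = re.split(r"(?<=[.!?])\s+", passage.strip())
    q = content_words(question)
    return max(sentences, key=lambda s: len(q & content_words(s)))

passage = ("Tokenization splits text into tokens. "
           "Named entity recognition finds people and places. "
           "Machine translation converts text between languages.")
print(best_sentence("Which task converts text between languages?", passage))
# Machine translation converts text between languages.
```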

Text Generation: Creating human-like text or speech output based on input data or predefined templates. Text generation models, such as language models and chatbots, use NLP techniques, such as sequence-to-sequence learning and attention mechanisms, to produce coherent and contextually relevant responses.
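The template route mentioned above is the simplest form of text generation to show, using Python's standard `string.Template`. The weather template and field names are hypothetical. Template systems are predictable but rigid. Language models trade that predictability for fluency and flexibility.

```python
from string import Template

# Hypothetical template for turning a structured weather record into a sentence.
WEATHER_TEMPLATE = Template("In $city it will be $condition today, with a high of $high degrees Celsius.")

def describe_weather(record: dict) -> str:
    """Fill the template from a record; substitute() raises KeyError if a field is missing."""
    return WEATHER_TEMPLATE.substitute(record)

print(describe_weather({"city": "Oslo", "condition": "cloudy", "high": 7}))
# In Oslo it will be cloudy today, with a high of 7 degrees Celsius.
```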

NLP applications are pervasive across various domains and industries, including:

- Information Retrieval: Helping users find relevant information in large collections of text, as in web search engines and document retrieval systems.

- Sentiment Analysis: Analyzing opinions, attitudes, and emotions expressed in text, for uses such as social media monitoring and customer feedback analysis.

- Text Summarization: Generating concise summaries of long documents or articles, as in news and document summarization tools.

- Speech Recognition: Transcribing spoken language into text, enabling voice-controlled systems and virtual assistants like Siri and Alexa.

- Language Understanding: Enabling human-computer interaction through natural language interfaces, such as chatbots, virtual agents, and voice-enabled applications.
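For the information retrieval application in the list above, a classic ranking approach is TF-IDF weighting with cosine similarity, sketched below. The IDF formula `log(N / df) + 1` is one common smoothed variant among several. The three documents are made up for illustration. Query terms that never occur in the collection are simply ignored.

```python
import math
import re
from collections import Counter

def tokenize_words(text):
    return re.findall(r"\w+", text.lower())

def tfidf_vectors(docs):
    """Build a sparse TF-IDF vector (term -> weight) for each document."""
    tokenized = [Counter(tokenize_words(d)) for d in docs]
    n = len(docs)
    df = Counter(term for counts in tokenized for term in counts)  # document frequency
    idf = {term: math.log(n / d) + 1.0 for term, d in df.items()}
    vectors = [{term: tf * idf[term] for term, tf in counts.items()} for counts in tokenized]
    return vectors, idf

def cosine(u, v):
    dot = sum(w * v.get(term, 0.0) for term, w in u.items())
    norm = math.sqrt(sum(w * w for w in u.values())) * math.sqrt(sum(w * w for w in v.values()))
    return dot / norm if norm else 0.0

def search(query, docs):
    """Return document indices ranked by cosine similarity to the query."""
    vectors, idf = tfidf_vectors(docs)
    q_vec = {t: tf * idf[t] for t, tf in Counter(tokenize_words(query)).items() if t in idf}
    scores = [cosine(q_vec, d) for d in vectors]
    return sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)

docs = [
    "Speech recognition transcribes spoken language into text.",
    "Search engines rank web pages for a query.",
    "Summarization tools condense long news articles.",
]
print(search("rank web pages", docs)[0])  # 1
```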

In recent years, advances in deep learning and neural network architectures, most notably the Transformer, have substantially improved performance on tasks like language modeling, machine translation, and text generation. As NLP techniques and technologies continue to develop, they are likely to further improve our ability to understand and interact with human language in increasingly sophisticated ways.

Language Generation: NLP techniques enable computers to generate human-like language, ranging from simple sentences to complex narratives. Language generation models, such as OpenAI’s GPT (Generative Pre-trained Transformer) series, have demonstrated remarkable capabilities in producing coherent and contextually relevant text across a wide range of topics and styles.
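Models like GPT generate text autoregressively. They predict a distribution over the next token, sample one, append it, and repeat. The sketch below shows that same loop at toy scale with a bigram model, which conditions on only the previous word, where GPT uses a Transformer over a long context. The `<s>`/`</s>` boundary markers and the function names are illustrative assumptions.

```python
import random
from collections import Counter, defaultdict

def train_bigram_model(sentences):
    """Count how often each word follows each other word, with sentence boundary markers."""
    model = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            model[prev][nxt] += 1
    return model

def generate(model, max_words=20, seed=None):
    """Sample words one at a time from P(next | previous) until </s> is drawn."""
    rng = random.Random(seed)
    word, output = "<s>", []
    for _ in range(max_words):
        followers = model[word]
        if not followers:  # nothing ever followed this word in training
            break
        word = rng.choices(list(followers), weights=list(followers.values()))[0]
        if word == "</s>":
            break
        output.append(word)
    return " ".join(output)

model = train_bigram_model(["the cat sat", "the dog sat"])
print(generate(model, seed=0))  # either "the cat sat" or "the dog sat"
```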

Language Understanding: NLP facilitates the understanding of human language by computers, enabling them to comprehend and respond to user queries in a meaningful way. This capability powers virtual assistants, customer service chatbots, and interactive systems that provide natural language interfaces for tasks like information retrieval, task automation, and decision support.

Text Classification: NLP algorithms can categorize text documents into predefined classes or labels based on their content. Text classification is used in various applications, including spam filtering, sentiment analysis, content categorization, and document organization.
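A traditional algorithm for text classification tasks such as spam filtering is multinomial Naive Bayes with add-one (Laplace) smoothing, sketched below. The four training messages and the `spam`/`ham` labels are made up for illustration. Scores are summed in log space to avoid numerical underflow. Words never seen in training are skipped.

```python
import math
from collections import Counter, defaultdict

def train_naive_bayes(examples):
    """examples: list of (text, label). Returns log priors, per-label word counts, vocabulary."""
    label_counts = Counter(label for _, label in examples)
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        words = text.lower().split()
        word_counts[label].update(words)
        vocab.update(words)
    priors = {c: math.log(n / len(examples)) for c, n in label_counts.items()}
    return priors, word_counts, vocab

def classify(text, priors, word_counts, vocab):
    """Pick the label maximizing log P(label) + sum of log P(word | label)."""
    scores = {}
    for label, log_prior in priors.items():
        total = sum(word_counts[label].values())
        score = log_prior
        for word in text.lower().split():
            if word in vocab:  # ignore words never seen in training
                score += math.log((word_counts[label][word] + 1) / (total + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

training = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch with the team on monday", "ham"),
]
nb_model = train_naive_bayes(training)
print(classify("claim your free prize", *nb_model))  # spam
```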

Information Extraction: NLP techniques extract structured information from unstructured text data, enabling computers to identify and capture relevant entities, relationships, and events. Information extraction is essential for tasks like data mining, knowledge discovery, and automatic database population.
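One of the oldest information extraction techniques is to match hand-written surface patterns and turn each match into a structured (subject, relation, object) triple, as sketched below. The "was founded in" pattern and the company names (Acme, Globex) are hypothetical. Patterns like this break on paraphrases such as "established in". That brittleness is why larger systems combine NER with learned relation classifiers.

```python
import re

# Hypothetical pattern: one or more capitalized words, then "was founded in" and a year.
FOUNDED_PATTERN = re.compile(r"(?P<org>[A-Z]\w*(?: [A-Z]\w*)*) was founded in (?P<year>\d{4})")

def extract_founded(text):
    """Return (organization, 'founded_in', year) triples for every pattern match."""
    return [(m.group("org"), "founded_in", int(m.group("year")))
            for m in FOUNDED_PATTERN.finditer(text)]

text = "Acme Robotics was founded in 1999 in Ohio. Globex Corp was founded in 2005."
print(extract_founded(text))
# [('Acme Robotics', 'founded_in', 1999), ('Globex Corp', 'founded_in', 2005)]
```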

Dialogue Systems: NLP powers dialogue systems that engage in conversational interactions with users, simulating human-like dialogue for purposes such as customer support, virtual tutoring, and entertainment. Dialogue systems leverage techniques like natural language understanding, generation, and context management to maintain coherent and meaningful conversations.
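Context management in a task-oriented dialogue system can be sketched as a frame-based (slot-filling) manager. A dialogue state persists across turns. Each user turn fills some slots, and the system asks for whatever is still missing. The restaurant-booking slots, prompts, and regular expressions below are hypothetical and deliberately crude. Real systems use trained language-understanding components for the extraction step.

```python
import re

# Hypothetical slots and prompts for a restaurant-booking task.
REQUIRED_SLOTS = ("cuisine", "party_size", "time")
PROMPTS = {
    "cuisine": "What kind of food would you like?",
    "party_size": "For how many people?",
    "time": "What time should I book?",
}

def update_state(state, utterance):
    """Crude slot extraction from one user turn; slots filled earlier are kept."""
    text = utterance.lower()
    for cuisine in ("italian", "thai", "indian"):
        if cuisine in text:
            state["cuisine"] = cuisine
    if m := re.search(r"\b(\d+) (?:people|persons|guests)\b", text):
        state["party_size"] = int(m.group(1))
    if m := re.search(r"\b(\d{1,2}(?::\d{2})?\s?(?:am|pm))\b", text):
        state["time"] = m.group(1)
    return state

def next_system_turn(state):
    """Ask for the first missing slot, or confirm once the frame is complete."""
    for slot in REQUIRED_SLOTS:
        if slot not in state:
            return PROMPTS[slot]
    return f"Booking {state['cuisine']} for {state['party_size']} at {state['time']}."

state = {}
update_state(state, "I'd like Thai food")
print(next_system_turn(state))  # For how many people?
update_state(state, "4 people at 7pm")
print(next_system_turn(state))  # Booking thai for 4 at 7pm.
```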

Multimodal NLP: With the proliferation of multimedia content, multimodal NLP integrates text with other modalities such as images, videos, and audio to enable richer and more nuanced language understanding. Multimodal NLP applications include image captioning, video summarization, and speech-to-text transcription, where text and non-textual data are jointly processed and analyzed.

Ethical Considerations: As NLP technologies become more pervasive, ethical considerations around issues such as bias, fairness, privacy, and accountability become increasingly important. Addressing these concerns requires interdisciplinary collaboration between researchers, policymakers, and industry stakeholders to ensure that NLP systems are developed and deployed responsibly and ethically.

Future Directions: The future of NLP holds promise for further advancements in areas such as contextual understanding, commonsense reasoning, and human-like language generation. Research efforts are focused on developing more robust, interpretable, and generalizable NLP models that can understand and generate language with greater accuracy, coherence, and sensitivity to context.

In summary, Natural Language Processing (NLP) is a rapidly evolving field that encompasses a wide range of techniques and applications for enabling computers to understand, interpret, and generate human language. From information retrieval and sentiment analysis to dialogue systems and language generation, NLP technologies are changing how we interact with computers and enabling new forms of human-computer communication and collaboration. As NLP continues to advance, its influence is likely to grow across many areas of society, from education and healthcare to business and entertainment, making information expressed in human language easier to find, understand, and use.